AI’s impact on our world is undeniable. Everywhere you look, from chatbots to self-driving cars, AI is shaping the present and the future.

The year 2023 has been nothing short of thrilling in the AI space, especially when it comes to predictions and declarations.

Let’s dive deep into the whirlwind of AI announcements and what the experts are saying about AGI (Artificial General Intelligence) timelines.

A Glimpse of Predictions – How Far Can AI Go?

A decade ago, few could have predicted the rapid advancement of AI. Yet Shane Legg, the co-founder of Google DeepMind, did just that. Way back in 2009, he made a bold prediction that human-level AI could become a reality by 2025. Fast forward to 2023, and he largely stands by that expectation, giving it a 50% chance by 2028. Imagine an AI that’s less prone to hallucination, more factual, and updated in real time. It’s like talking to a person, but it’s a machine!

But Shane isn’t alone in his bold predictions. Sam Altman of OpenAI hints that AGI might be closer than we think. AGI, often called the “final form” of AI, is the point at which machines can perform any intellectual task that humans can. So when Sam mentions that their mission might be completed around 2030-2031, it sends ripples of excitement (and maybe a bit of fear) across the tech world.

AI’s Wild Predictions

Now, let’s address the elephant in the room.

Paul Christiano, formerly with OpenAI, made a jaw-dropping prediction on the Dwarkesh Patel podcast.

He stated there’s a 15% chance of an AI capable of constructing a Dyson Sphere by 2030, and 40% by 2040. For the uninitiated, a Dyson Sphere is a hypothetical megastructure that surrounds a star, capturing its energy. It’s sci-fi turned potential reality!

https://www.youtube.com/watch?v=9AAhTLa0dT0

All respective rights belong to the Dwarkesh Patel YouTube channel.

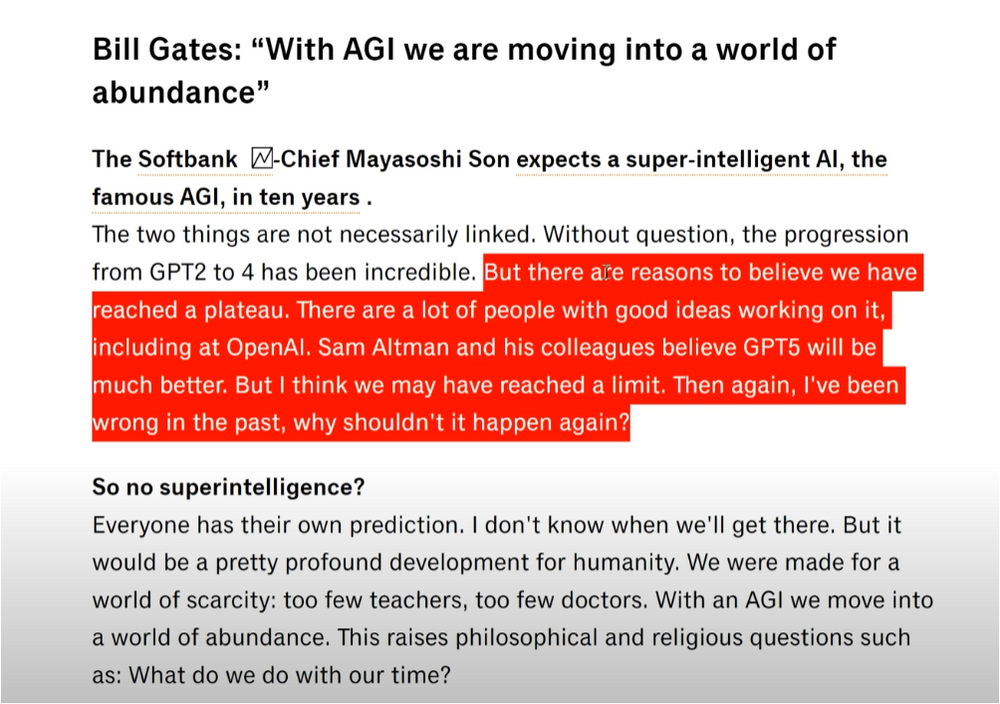

Bill Gates, the tech mogul, has his reservations. He feels that after the leap from GPT-2 to GPT-4, progress might have hit a plateau. But, as he admits, he could be wrong. The tech world is abuzz with speculation, and it’s this uncertainty that keeps it thrilling.

AGI and Safety

With great power comes great responsibility. The rapid advancements toward AGI have led not only to marvel but also to concern. An executive order from the White House has caused quite the stir. It touches on the security of model weights and the safety of any AI model trained with immense computing power. It’s a move toward ensuring that as we race towards AGI, we don’t compromise on security.

Companies like Anthropic are setting safety benchmarks. They’ve committed not to deploy or scale any future model that poses serious risks, such as enabling cyberattacks, bioterrorism, or nuclear threats, until adequate safeguards are in place. It’s a commitment that underscores the need for balance in our pursuit of AGI.

AI Safety Summit, A Global Conversation

The AI Safety Summit at Bletchley Park brought together some of the biggest names in AI research and development. Here, numerous companies and nations set out their declarations on AI’s scaling, safety, and deployment.

The summit was a beacon of hope. It saw representatives from major countries come together, acknowledging AI’s potential and the need for responsible scaling. OpenAI, for instance, reframed its approach as a “risk-informed development policy.” It’s a sign of growing global consensus on moving forward responsibly.

Google DeepMind, a significant player, shares this sentiment. They’ve expressed their commitment to only proceed when the benefits substantially outweigh the risks. It’s a middle path that acknowledges the potential of AGI while also ensuring it’s not deployed recklessly.

The Bletchley Declaration was not just about individual companies. It was a global conversation. Even nations like China were invited, signaling a move towards international cooperation. The idea is clear: AI and AGI are not just the domain of a single nation or company; they are global phenomena that require global cooperation and guidelines.

The Implications of the Declarations

These declarations are more than just words; they set the course for the future of AI. They signal a collective acknowledgment of AI’s potential and the need for responsible and ethical development.

The emphasis on safety, especially from major players like OpenAI, Anthropic, and Google DeepMind, also suggests an industry that’s maturing. Gone are the days of unchecked development; the future will be about sustainable and safe AI growth.

Moreover, these declarations send a clear message to the public. As AI becomes an integral part of our lives, these commitments ensure that the technology we come to rely on is developed with our best interests in mind.

The Road Ahead

As we stand on the cusp of a new era in AI, it’s worth taking a moment to marvel at the journey so far. The year 2023 has been a testament to the incredible strides we’ve made in AI and toward AGI. From bold predictions to safety declarations, it’s clear that the world is preparing for an AI-driven future.

In the words of the person heading the safety summit, “There’s more agreement between key people on all sides than you’d think.” It’s this spirit of collaboration and unity that will guide the future of AGI. As we navigate the exciting road ahead, it’s essential to always remember the balance between advancement and safety.

The story of AI and AGI in 2023 is still being written. But one thing’s for sure: it’s a story filled with promise, potential, and a pinch of unpredictability. Here’s to the future!

Note: The views and opinions expressed by the author, or any people mentioned in this article, are for informational and educational purposes only, and they do not constitute financial, investment, or other advice.
