In the world of artificial intelligence, superintelligence is the ultimate frontier, the Holy Grail, the Mount Everest summit.

It marks a time when AI systems will not just mimic human intelligence but surpass it.

Imagine an AI that can outperform the best human minds in virtually every field, including scientific creativity, general wisdom, and social skills.

The debate now isn’t about whether this will happen, but rather when.

We are caught between the wildly varying predictions of imminent arrival within a year or two and the more conservative estimates of a couple of decades.

So, what’s the real deal? Let’s unpack these timelines.

The Conservative Estimate

Mustafa Suleyman, the head of Inflection AI, places us a decade or two away from needing to worry about superintelligence. This is intriguing considering his company recently built the world’s second-highest-performing supercomputer.

In the video below, from 1:17, you can hear Mustafa Suleyman say, ‘We need AI to be held accountable.’ The video was produced by “Channel 4 News”, and all rights belong to their respective owners.

https://www.youtube.com/watch?v=oAxNkehgzEU

The compute power of Inflection AI’s supercomputer is three times that used to train the mammoth GPT-4. So, why the cautious estimate? Perhaps it’s a case of under-promise and over-deliver. Or maybe it’s the recognition that the journey to superintelligence isn’t linear or predictable.

It’s a complex interplay of advancements in compute power, algorithmic innovations, data availability, and perhaps more importantly, safety measures.

The More Ambitious Timelines

Then we have the more ambitious forecasts. Take Jacob Steinhardt of Berkeley, who projects, based on current scaling laws, that we could reach superintelligence by 2030.

That’s just six and a half years away!

What does this mean?

We’re talking about AI systems capable of superhuman performance in tasks like coding, hacking, mathematics, and protein engineering, and of performing millions of years of work in mere months.

The Boston Globe goes even further, hinting at a possible “runaway power” situation within two years, where AI might have so much power it can’t be pulled back.

This is a stark contrast to Suleyman’s more conservative view and perhaps a more cautionary tale of what could happen if we don’t keep tabs on AI advancements.

The OpenAI Proclamation

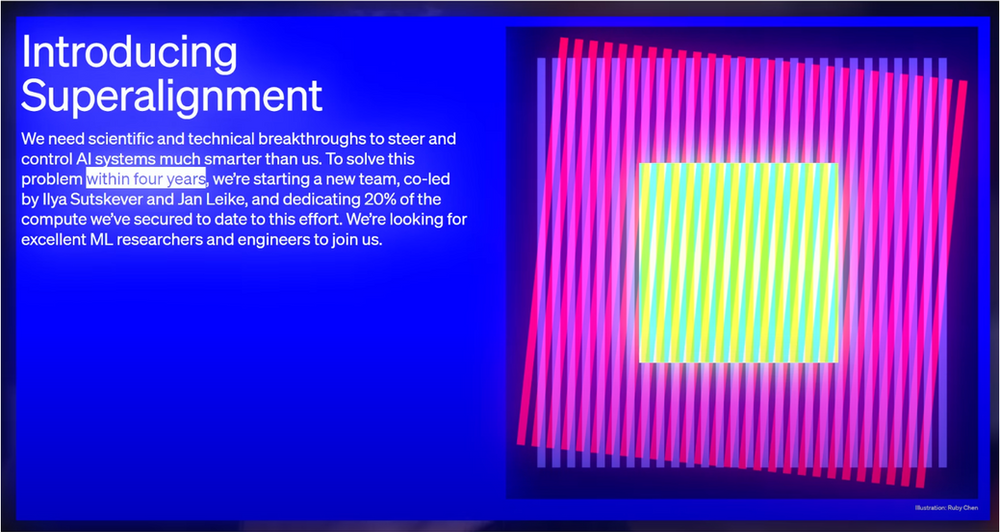

OpenAI, the renowned AI research lab, recently took the debate to a whole new level. In an unprecedented move, they announced a four-year timeline to make superintelligence safe.

A new team has been set up, co-led by Ilya Sutskever and Jan Leike, with a whopping 20% of the compute OpenAI has secured dedicated to this effort.

The declaration has drawn mixed reactions, with some lauding OpenAI’s commitment to accountability, and others, including the prediction markets, giving them only a 15% chance of succeeding.

The Roadblocks

While these timelines are fascinating, they aren’t without hurdles. Take the issue of “jailbreaking” large language models, where AI systems can be manipulated into performing unintended tasks, possibly illegal or harmful ones. If unchecked, this could cause a significant setback in the race to superintelligence.

Legal and ethical considerations could also slow down the pace. Yuval Noah Harari, the renowned historian and author, recently called for sanctions, including prison sentences, for tech companies that fail to guard against the creation of fake humans.

The proliferation of such entities could lead to a collapse in public trust and democracy, posing a significant challenge to the development and deployment of superintelligent AI.

The Speed Boosters

On the flip side, a few factors could fast-track the timeline.

Military competition, for instance, has always been a potent catalyst for technological advancements. With AI becoming a critical component of modern warfare, militaries across the globe are investing heavily in its capabilities.

This could, in turn, expedite the development of superintelligence.

Economic automation is another powerful driver. As AI grows more capable, businesses are compelled to delegate increasingly high-level decisions to it to keep up with competitors. This shift could rapidly usher us into an era of superintelligent AI.

Balancing Development and Safety

In our rush to usher in the age of superintelligence, we must not forget about the importance of safety and ethical considerations.

OpenAI’s ambitious four-year plan isn’t just about developing superintelligence. It’s also about making it safe. As AI systems grow smarter and more powerful, they have the potential to be very dangerous.

They could lead to the disempowerment of humanity or, in a worst-case scenario, human extinction. It’s crucial that we invest in safety research and develop robust methods to align these systems with our values and goals.

The Potential of Superintelligence

When we talk about superintelligence, it’s easy to focus on the potential dangers.

However, it’s just as important to consider the incredible benefits it could bring.

Superintelligence could help us solve some of the world’s most pressing problems, from climate change to disease. It could transform our economy, increase our productivity, and open up new avenues for scientific discovery. It could even help us explore the universe and answer some of our most profound questions.

Preparing for the Future

Despite the uncertainties, one thing is clear: We need to start preparing for the arrival of superintelligence.

This means investing in research and development, but also in education and public awareness. We need to have open and honest discussions about what superintelligence means for our society and how we can ensure it benefits all of humanity.

We also need to ensure that our legal and regulatory frameworks keep pace with technological advancements. As Yuval Noah Harari pointed out, the proliferation of fake humans could lead to a collapse in public trust. We need laws and regulations that can guard against these and other potential abuses of AI.

The Role of Collaboration

The development of superintelligence is a global challenge that requires a global response. It’s not something that any one company, country, or group of researchers can tackle alone.

We need to foster international collaboration and information sharing. We also need to engage a broad range of stakeholders, from scientists and policymakers to civil society and the general public.

Conclusion

The arrival of superintelligence isn’t a matter of if but when. It’s an eventuality that we must prepare for, whether it happens in one year, four years, six years, or twenty.

As AI continues to evolve, so should our understanding and preparation for the coming age of superintelligence. The sooner we align on terms, acknowledge the possibilities, and prepare for potential outcomes, the better equipped we’ll be when superintelligence becomes a reality.

Whatever the timeline, one thing is certain – superintelligence is coming, and it will be the most impactful technology humanity has ever invented. How we handle this transition will determine not just our future but possibly the very survival of our species. Let’s make sure we get it right.

Note: The views and opinions expressed by the author, or any people mentioned in this article, are for informational purposes only, and they do not constitute financial, investment, or other advice.
